Why precision luminosity measurements matter

The ATLAS and CMS experiments at the Large Hadron Collider (LHC) have performed luminosity measurements with spectacular precision. A recent physics briefing from CMS complements earlier ATLAS results and shows that, by combining multiple methods, both experiments have reached a precision better than 2%. For physics analyses – such as searches for new particles, rare processes or measurements of the properties of known particles – it is important not only for accelerators to increase luminosity, but also for physicists to understand it with the best possible precision.

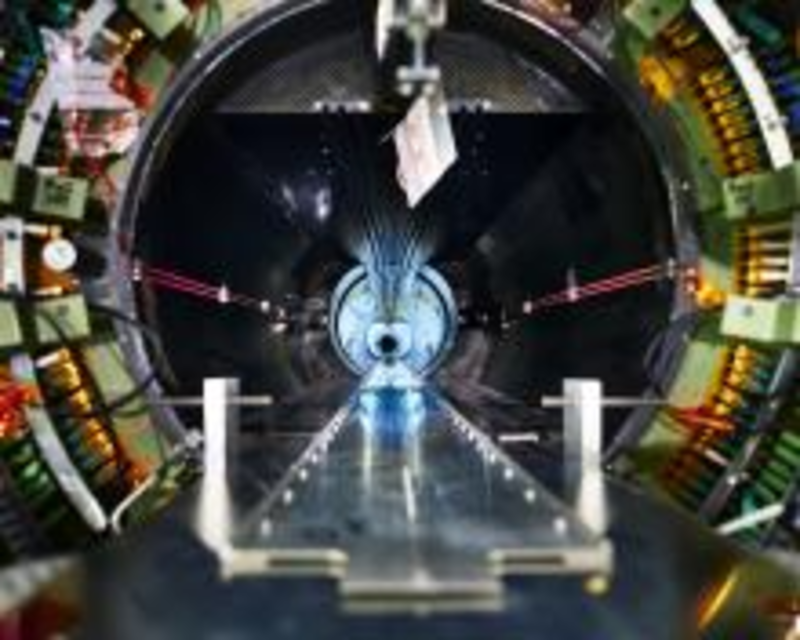

Luminosity is one of the fundamental parameters used to measure an accelerator’s performance. In the LHC, the circulating protons do not form continuous beams but are grouped into packets, or “bunches”, of about 100 billion protons each. These bunches collide with oncoming bunches 40 million times per second at the interaction points within the particle detectors. But when two such bunches pass through each other, only a few protons from each bunch end up interacting with the protons circulating in the opposite direction. Luminosity is a measure of the number of these interactions. It comes in two main forms: instantaneous luminosity, which describes the number of collisions happening in a unit of time (for example, every second), and integrated luminosity, which measures the total number of collisions produced over a period of time.

Integrated luminosity is usually expressed in units of “inverse femtobarns” (fb⁻¹). A femtobarn is a unit of cross-section, a measure of the probability for a process to occur in a particle interaction. This is best illustrated with an example: the total cross-section for Higgs boson production in proton–proton collisions at 13 TeV at the LHC is of the order of 6000 fb. This means that every time the LHC delivers 1 fb⁻¹ of integrated luminosity, about 6000 fb × 1 fb⁻¹ = 6000 Higgs bosons are produced.
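The arithmetic above can be sketched in a few lines of Python. This is only an illustration: the function name `expected_events` and its parameters are hypothetical, and it assumes the simple relation that the expected number of events is the cross-section multiplied by the integrated luminosity, with no detector acceptance or efficiency applied.

```python
def expected_events(cross_section_fb: float,
                    integrated_luminosity_inv_fb: float) -> float:
    """Expected event count = cross-section (fb) x integrated luminosity (fb^-1).

    Hypothetical helper: ignores detector acceptance and efficiency.
    """
    return cross_section_fb * integrated_luminosity_inv_fb


# Values quoted in the text: a cross-section of about 6000 fb
# and 1 fb^-1 of delivered integrated luminosity.
print(expected_events(6000.0, 1.0))
```

Because the units fb and fb⁻¹ cancel, the result is a plain number of events; doubling the integrated luminosity doubles the expected count.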

Knowing the integrated luminosity allows physicists to compare observations with theoretical predictions and simulations. For example, physicists can look for dark matter particles that escape collisions undetected by looking at the energies and momenta of all particles produced in a collision. If there is an imbalance, it could be caused by an undetected, potentially dark matter, particle carrying energy away. This is a powerful method of searching for a large class of new phenomena, but it has to take into account many effects, such as neutrinos produced in the collisions. Neutrinos also escape undetected and leave an energy imbalance, so in principle, they are indistinguishable from the new phenomena. To see if something unexpected has been produced, physicists have to look at the numbers.

If 11 000 events show an energy imbalance, and the simulations predict 10 000 events containing neutrinos, the excess of 1000 events could be significant. But if physicists only know the luminosity with a precision of 10%, all 11 000 events could easily be neutrino events: there may simply have been 10% more collisions than assumed. Clearly, a precise determination of luminosity is critical.
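To make the comparison concrete, the following sketch expresses the excess in units of the uncertainty that the luminosity alone puts on the prediction. The function names are hypothetical, the 2% case is taken from the precision quoted at the start of this article, and the sketch deliberately ignores statistical fluctuations and every other source of uncertainty.

```python
def luminosity_uncertainty_events(predicted_events: float,
                                  relative_luminosity_uncertainty: float) -> float:
    """Uncertainty on the predicted count caused by the luminosity alone."""
    return predicted_events * relative_luminosity_uncertainty


def excess_over_luminosity_uncertainty(observed_events: float,
                                       predicted_events: float,
                                       relative_luminosity_uncertainty: float) -> float:
    """Observed excess divided by the luminosity-induced uncertainty.

    Simplification: statistical and other systematic uncertainties are ignored.
    """
    excess = observed_events - predicted_events
    return excess / luminosity_uncertainty_events(
        predicted_events, relative_luminosity_uncertainty
    )


# Numbers from the text: 11 000 observed events, 10 000 predicted.
for rel_unc in (0.10, 0.02):
    ratio = excess_over_luminosity_uncertainty(11_000, 10_000, rel_unc)
    print(f"luminosity known to {rel_unc:.0%}: excess is {ratio:.1f}x the uncertainty")
```

With a 10% luminosity uncertainty the excess is no larger than the uncertainty itself, so it says nothing. With 2%, the same excess is five times larger than the luminosity-induced uncertainty and would deserve a closer look.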

There are also types of analyses that depend much less on absolute knowledge of the number of collisions. One example is measurements of ratios of different particle decays, such as the recent LHCb measurement. Here, uncertainties in luminosity cancel out in the ratio. Other searches for new particles look for peaks in a mass distribution and so rely more on the shape of the observed distribution and less on the absolute number of events. But even these analyses need to know the luminosity for any kind of interpretation of the results.
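The cancellation in a ratio can be illustrated with a small sketch. The function `event_ratio` and the rates of 30 fb and 20 fb are hypothetical, chosen only for illustration, and the sketch assumes that both decay channels are recorded in the same dataset, so that the same integrated luminosity multiplies both counts.

```python
def event_ratio(rate_a_fb: float, rate_b_fb: float,
                integrated_luminosity_inv_fb: float) -> float:
    """Ratio of expected event counts for two channels in the same dataset.

    Each count is rate x integrated luminosity, so the luminosity
    appears in both numerator and denominator and cancels.
    """
    events_a = rate_a_fb * integrated_luminosity_inv_fb
    events_b = rate_b_fb * integrated_luminosity_inv_fb
    return events_a / events_b


# Hypothetical rates; the second dataset assumes 10% more luminosity.
print(event_ratio(30.0, 20.0, 100.0))
print(event_ratio(30.0, 20.0, 110.0))
```

Both calls give the same ratio up to floating-point rounding, even though the assumed luminosity differs by 10%.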

Ultimately, the greater the precision of the luminosity measurement, the more physicists can understand their observations and delve into hidden corners beyond our current knowledge.